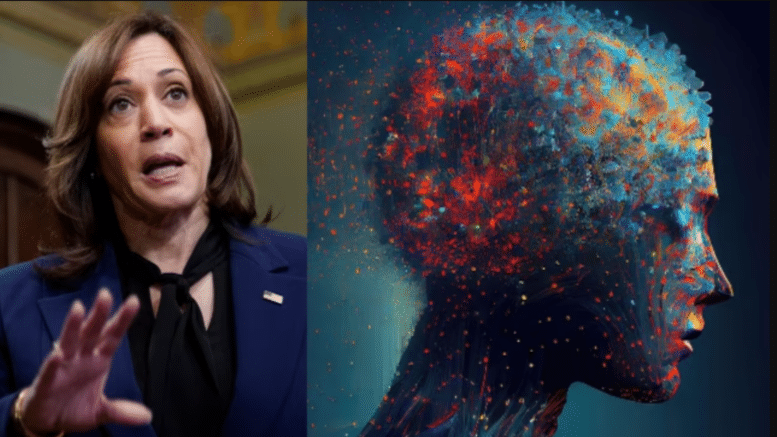

White House To Crack Down On AI, Appoints VP Kamala Harris To Lead Task Force

The White House has revealed that it has a plan ready to regulate AI. The effort will be led by VP Kamala Harris. The idea is to get companies like Google, Microsoft, and ChatGPT’s creator OpenAI to participate in a public review.

The White House has outlined its strategy to clamp down on the AI race, amid mounting fears that the technology may disrupt society as we know it. The Biden Administration described the technology as ‘one of the most powerful’ of our time, but added that “in order to exploit the benefits it brings, we must first limit its hazards.”

The plan calls for 25 research institutes around the United States and seeks assurances from four businesses, including Google, Microsoft, and ChatGPT’s creator OpenAI, that they will ‘participate in a public review.’

Many of the world’s brightest minds have warned about the dangers of AI, specifically that it could destroy humanity if a risk assessment is not conducted immediately. Elon Musk and other tech titans are concerned that AI may soon outperform human intellect and think for itself.

In that scenario, it would no longer need or listen to humans, giving it the ability to steal nuclear codes, cause pandemics, and trigger world conflicts.

Vice President Kamala Harris, who has the lowest popularity rating of any VP, will oversee the containment effort as ‘AI czar’ with a $140 million budget. In comparison, the Space Force has a $30 billion budget.

Harris met with officials from Google, Microsoft, and OpenAI on Thursday to explore ways to mitigate such possible hazards.

Harris said in a statement, “As we shared today with CEOs of companies at the forefront of American AI innovation, the private sector has an ethical, moral, and legal responsibility to ensure the safety and security of their products. And, in order to safeguard the American people, every firm must follow current laws. I’m looking forward to the follow-through and follow-up in the coming weeks.”

Each company’s AI will be evaluated this summer at a hacker event in Las Vegas to check whether it adheres to the administration’s ‘AI Bill of Rights.’

The November release of the ChatGPT chatbot sparked a renewed discussion over AI and the government’s role in monitoring the technology. It raises ethical and cultural concerns because AI can create human-like text and phoney visuals.

These include distributing harmful content, violating data privacy, amplifying existing bias, and – Elon Musk’s favourite – destroying humanity.

“President Biden has been clear that when it comes to AI, we must place people and communities at the centre by supporting responsible innovation that serves the public good while protecting our society, security, and economy,” reads the White House announcement.

“Importantly, this means that businesses have a basic obligation to ensure the safety of their goods before they are deployed or made public.”

According to the White House, the public review will be carried out by thousands of community partners and AI specialists.

Professionals in the industry will test the models to evaluate how they align with the principles and practices defined in the AI Bill of Rights and the AI Risk Management Framework.

Biden’s AI Bill of Rights, which was released in October 2022, lays out a framework for how the government, technology corporations, and individuals may collaborate to create more accountable AI.

The framework has five principles: safe and effective systems, protections against algorithmic discrimination, data privacy, notice and explanation, and human alternatives, consideration, and fallback.

The White House stated in October, “This framework applies to automated systems that have the potential to meaningfully impact the American public’s rights, opportunities, or access to critical resources or services.”

The White House’s plan of action comes after Musk and 1,000 other technology executives, including Apple co-founder Steve Wozniak, signed an open letter in March calling for a pause in the development of advanced AI systems.

Musk is concerned that the technology will evolve to the point where it will no longer require, or listen to, human intervention. It is a widely held fear that has even been acknowledged by the CEO of OpenAI, the company that created ChatGPT, who stated earlier this month that the technology could be developed and used to commit ‘widespread’ cyberattacks.

from: https://www.technocracy.news/white-house-to-crack-down-on-ai-appoints-vp-kamala-harris-to-lead-task-force/